Meta's Massive Nvidia GPU Deal Signals the AI Chip Arms Race Has Entered a Dangerous New Phase

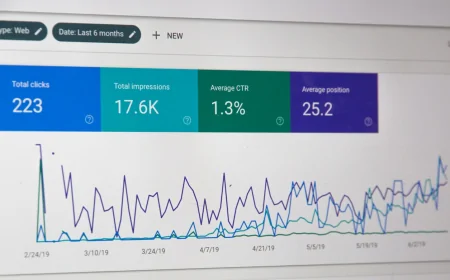

Meta has secured one of the largest single orders of Nvidia AI accelerators in corporate history as Big Tech races to control the physical hardware backbone of the AI economy. Analysts warn that compute scarcity, not a shortage of ideas, is now the defining bottleneck shaping the entire industry's future.

Meta's GPU Blitz: Why Silicon Valley Is Betting Its Future on Chips It Cannot Get Enough Of

Mark Zuckerberg wants more chips. A lot more. According to multiple reports confirmed on Tuesday, Meta has locked in one of the largest single orders of Nvidia AI accelerators in corporate history: a deal spanning hundreds of thousands of Nvidia's latest-generation GPUs and representing a capital commitment running into the tens of billions of dollars.

The move is not a surprise, exactly. But its scale is. And it tells a story about where the AI industry has arrived in early 2026: a place where the competition is no longer primarily about algorithms, research talent, or even data. It is about who controls the physical hardware that makes AI run.

The Logic Behind the Spending

Meta's latest AI strategy is built around two pillars: its own large language models, which compete directly with OpenAI's models and Google's Gemini, and the AI features embedded throughout its product suite, including Facebook, Instagram, WhatsApp, and the Ray-Ban Meta smart glasses. All of it requires enormous compute capacity, and Nvidia's H200 and Blackwell-generation B200 chips are the current gold standard.

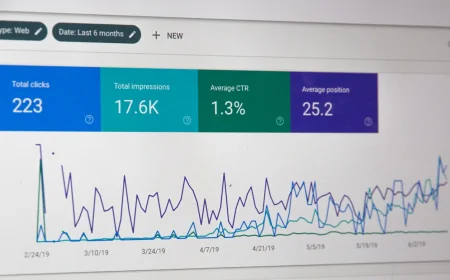

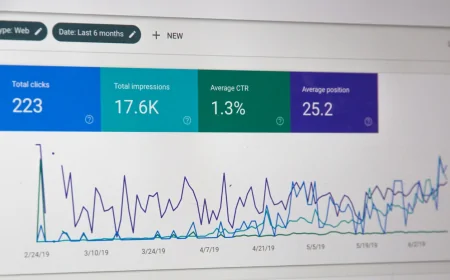

The problem is that demand for these chips is dramatically outstripping supply. Nvidia's manufacturing partner, TSMC, is running its fabs at or near maximum capacity, and lead times for GPU orders have stretched to 12 months or more. Companies that do not lock in supply now risk falling behind competitors that do.

Semiconductor analyst Dr. Kevin Chen of Bernstein Research describes Meta's move as essentially strategic stockpiling. In his view, the company is not buying chips for the workloads it has today; it is buying them for the workloads it expects to need 18 months from now, betting that it cannot afford to wait.

Can Anyone Catch Nvidia?

South Korea's SK Hynix announced Tuesday that it is ramping production of its HBM3E high-bandwidth memory by 40 percent in response to surging demand. HBM stacks sit in the same package as the AI processor, right next to the compute die, and dramatically increase how quickly data can be fed to the model. Without it, even the fastest GPUs underperform significantly.

The full picture of the AI hardware ecosystem is becoming clearer: a small number of companies control the bottlenecks, and those bottlenecks are physical: land, power grids, cooling water, rare-earth materials, and specialized fabs. The AI race has quietly become an infrastructure race with geopolitical dimensions extending well beyond Silicon Valley.

Nvidia now commands more than 85 percent of the AI accelerator market, and its CEO, Jensen Huang, has become one of the most closely watched figures in global technology. Whether hardware supremacy translates into lasting competitive advantage, or whether the value migrates up the stack to applications and interfaces, is the question that will define 2026.