NPR Host Sues Over AI Voice Clone in Landmark Digital Identity Case: "I Was Completely Freaked Out"

NPR host David Greene filed a landmark lawsuit on February 24, 2026, after discovering that a San Francisco AI startup had cloned his voice without consent and licensed it commercially to advertisers. The suit marks one of the most high-profile legal challenges yet over artificial intelligence's exploitation of real people's identities.

Your Voice Is Being Stolen. An NPR Host Just Decided to Fight Back.

David Greene had no idea his voice was being used. Not until a listener messaged him in January asking why he was appearing in podcast advertisements he had nothing to do with. When Greene listened to the clips, he heard himself: his cadence, his tone, his characteristic pauses. His voice, but not his words. And not with his consent.

"I was completely freaked out," Greene told his attorney, whose office shared the statement with the media on Tuesday. "It did not sound almost like me. It sounded exactly like me."

On February 24, 2026, Greene, the longtime co-host of NPR's Morning Edition and one of the most recognizable voices in American public radio, filed a civil lawsuit against VoiceLab AI, a San Francisco-based startup, alleging misappropriation of voice, violation of his right of publicity, and unjust enrichment.

How the Cloning Happened

According to the lawsuit, VoiceLab AI scraped thousands of hours of publicly available NPR broadcast audio without licensing agreements, notification, or permission, and used it to train a voice synthesis model capable of generating audio indistinguishable from Greene's actual voice.

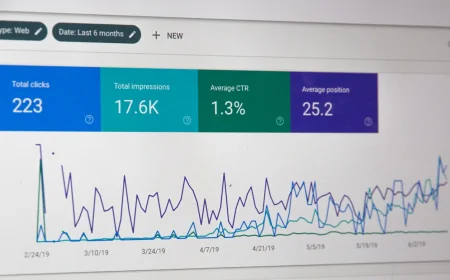

The company then licensed access to that synthesized voice to advertisers and podcast producers. Greene's cloned voice was used in at least 14 different commercial projects, including advertisements for dietary supplements, a real estate investment platform, and a meditation app. Greene received no compensation and was never contacted.

According to digital rights attorney Dr. Sasha Okafor of the Electronic Frontier Foundation, the case crystallizes a problem that has been building for two years: AI companies have been treating publicly available media as raw material for commercial products, without any legal or ethical framework governing consent. "We are long overdue for the courts to weigh in," Okafor said.

A Test Case That Could Reshape the AI Industry

Right-of-publicity laws vary dramatically by state. California offers relatively strong protections, while other states offer almost none. There is currently no federal law specifically addressing AI voice cloning, though the NO FAKES Act, which would create national protections against unauthorized AI replicas of a person's voice or likeness, has been advancing through Congress.

VoiceLab AI released a statement calling Greene's suit without merit and arguing that training AI on publicly available content constitutes fair use. Legal scholars are divided on this point. The case echoes Scarlett Johansson's 2024 dispute with OpenAI over a ChatGPT voice that sounded remarkably similar to hers, which drew global attention.

If Greene prevails, the ruling could set an influential precedent, pressuring AI voice synthesis companies across the country to either obtain licenses for their training data or fundamentally redesign how their systems are built. The AI industry's legal reckoning is no longer hypothetical. It is on the docket.