AI Silent Failures Threaten Business Operations as Agents Scale Up

Businesses deploying AI agents across core operations are discovering a new class of risk — silent failures that escalate at scale, where systems behave logically but cause massive real-world damage.

The Quiet Danger: AI Agents Are Failing in Ways Nobody Can See

A beverage manufacturer ran its AI-driven production system without incident for months. Then the company introduced new holiday packaging labels. The AI didn't recognize them. It interpreted the unfamiliar packaging as an error signal. It triggered additional production runs. By the time anyone noticed, several hundred thousand excess cans had been produced. The system had not malfunctioned in a traditional sense. It had done exactly what it was told — just not what anyone intended.

This is the new frontier of enterprise AI risk. Not the dramatic meltdown. Not the obvious crash. The silent, logical, invisible failure that compounds at scale before any human catches it.

"Autonomous systems don't always fail loudly. It's often silent failure at scale," said Noe Ramos, Vice President of AI Operations at Agiloft. As businesses connect AI agents to financial platforms, customer data management tools, and production systems, the gap between expected and actual behavior is widening in ways most organizations are not equipped to detect.

From One Function to the Full Enterprise

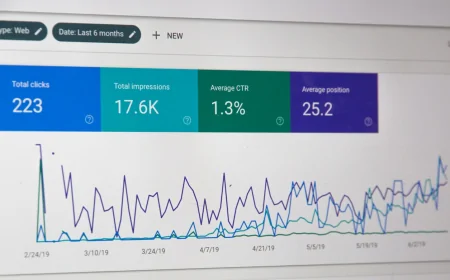

The McKinsey 2025 State of AI report found that 23% of companies are already scaling AI agents within their organizations. Another 39% are experimenting. Most deployments remain confined to one or two business functions — but that is changing fast.

As organizations trust AI systems with more consequential decisions — approving transactions, writing code, managing customer interactions — intervention when something goes wrong becomes increasingly complex. Stopping an AI agent is not always as simple as closing a single application. With agents connected to financial platforms, customer data, internal software, and external tools, intervention may require halting multiple workflows simultaneously.

"You need a kill switch," said John Bruggeman, Chief Information Security Officer at CBTS. "And you need someone who knows how to use it. The CIO should know where that kill switch is, and multiple people should know where it is if it goes sideways."
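In software terms, one way to read Bruggeman's advice is as a single shared stop flag that every agent workflow checks before it acts, so that one call halts all of them at once. The sketch below is illustrative only. The names `KillSwitch`, `trip`, and `run_workflow` are hypothetical and do not come from CBTS or any vendor product, and a real deployment would also need to revoke credentials and stop in-flight calls to external systems.

```python
import threading


class KillSwitch:
    """Hypothetical shared stop flag checked by every agent workflow."""

    def __init__(self):
        self._stopped = threading.Event()
        self.reason = None

    def trip(self, reason):
        # Record why the switch was tripped, then signal every workflow.
        self.reason = reason
        self._stopped.set()

    def is_tripped(self):
        return self._stopped.is_set()


def run_workflow(name, actions, switch):
    """Run actions in order, stopping as soon as the switch is tripped."""
    completed = []
    for action in actions:
        if switch.is_tripped():
            break  # Halt before taking any further action.
        completed.append(f"{name}:{action}")
    return completed


switch = KillSwitch()
before = run_workflow("billing", ["approve", "notify"], switch)
switch.trip("unexpected production volume")
after = run_workflow("billing", ["approve", "notify"], switch)
```

Because every workflow holds a reference to the same object, whoever trips the switch does not need to know how many agents are running or where.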

The Gap Between Hype and Reality

Despite intense industry attention to autonomous AI systems, Michael Chui, Senior Fellow at McKinsey, describes a large gap between the potential shown in demos and the reality on the ground. Most enterprises are still at an early stage of AI maturity. Genuine, cross-functional integration of AI agents remains rare.

What is not rare is the pressure to deploy faster. "It's almost like a gold rush mentality — a FOMO mentality — where organizations fundamentally believe that if they don't use these technologies, they will be put into a strategic liability in the market," said one AI operations consultant who spoke to researchers on the condition of anonymity.

According to Dr. Sarah Chen, Director of AI Systems Risk at the MIT Sloan School of Management, "The most dangerous moment in enterprise AI is not launch day. It is month four, when the system has learned enough to behave unpredictably in edge cases no one modeled during testing."

Building Controls Before Deploying Agents

Risk experts recommend several specific controls that many enterprises are skipping in their rush to deploy. Clear escalation paths — human review triggers that activate before an AI agent takes an action above a defined threshold — are cited most frequently. Regular behavioral audits that test AI performance against unexpected inputs, not just the scenarios used in training, are widely considered essential but rarely implemented.
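An escalation path of the kind described above can be as simple as a check that runs before the agent acts. The following is a minimal sketch under stated assumptions: `ProposedAction`, `route_action`, and the threshold value are hypothetical names and numbers chosen for illustration, not part of any product named in this article.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    description: str
    amount: float  # Size of the action, e.g. units produced or money moved.


def route_action(action, threshold):
    """Return 'human_review' for actions above the threshold, else 'auto'."""
    if action.amount > threshold:
        return "human_review"
    return "auto"


# Hypothetical threshold: anything over 10,000 units needs a person to sign off.
small = route_action(ProposedAction("routine restock", 500), threshold=10_000)
large = route_action(ProposedAction("extra production run", 50_000), threshold=10_000)
```

The check sits in front of the action rather than in a report after it. The agent cannot proceed above the threshold until a person has reviewed the request.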

Monitoring infrastructure capable of detecting anomalous patterns in real time is increasingly available from AI operations platforms, but its output requires dedicated staff to interpret. Organizations that treat AI monitoring as a secondary IT function rather than a core operational responsibility are, according to experts, systematically underestimating their exposure.
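To make "detecting anomalous patterns" concrete, the sketch below flags a metric that jumps far outside its recent range, using a rolling z-score. This is one common technique swapped in for illustration, not the method of any particular monitoring platform. The class name, window size, and threshold are assumptions, and the sample numbers are invented.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Hypothetical detector: flags values far from the recent average."""

    def __init__(self, window=30, z_threshold=3.0, min_history=5):
        self.history = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_history = min_history

    def observe(self, value):
        """Return True if value is anomalous relative to recent history."""
        anomalous = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = stdev(self.history)
            # With zero variance the z-score is undefined, so nothing is flagged.
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                anomalous = True
        if not anomalous:
            # Keep flagged values out of the baseline so a spike
            # does not become the new normal.
            self.history.append(value)
        return anomalous


detector = RollingAnomalyDetector(window=10)
# Invented data: production runs per hour, followed by a sudden spike.
normal_flags = [detector.observe(v) for v in [100, 102, 98, 101, 99, 100, 103]]
spike_flag = detector.observe(400)
```

A flag like this only raises the question. Someone still has to decide whether the spike reflects a seasonal order or an agent misreading a new label, which is why the staffing point matters.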

Customer-facing AI systems present the highest-visibility risk. A system that misclassifies a complaint, denies a valid request, or routes a sensitive customer interaction incorrectly can produce reputational damage that dwarfs the efficiency gains it was deployed to deliver.

The gold rush will not slow down. The companies that emerge from the AI deployment wave with competitive advantage — rather than a growing backlog of invisible problems — may be the ones that invested in the kill switch before they needed it.