OpenAI Revises Pentagon Contract After Sam Altman Admits It Looked 'Opportunistic'

OpenAI revised its Pentagon contract after CEO Sam Altman publicly admitted the original agreement appeared 'opportunistic and sloppy,' tightening guardrails on surveillance and automated systems.

OpenAI Pulls Back Pentagon Contract After Altman Concedes Failures

OpenAI said Tuesday it was revising its contract with the U.S. Department of Defense after a week of intense public criticism, during which its own CEO acknowledged that the original agreement had serious problems. Sam Altman, who took questions on X after announcing the Pentagon deal, said the contract's initial presentation looked "opportunistic and sloppy," unusually direct language for a sitting CEO acknowledging a strategic misstep. The revisions are designed to tighten guardrails on domestic surveillance applications and to clarify limits on how the technology can be used in government automated decision-making systems.

The controversy began when Anthropic — OpenAI's primary competitor in frontier AI development — publicly walked away from Pentagon contract negotiations, citing concerns about the agreement's terms around mass surveillance and automated lethal systems. OpenAI subsequently picked up the contract. Critics — including former DoD ethics advisors and civil liberties organisations — pointed out that the public record on what safeguards governed OpenAI's government deployment was thin, and that the speed of the deal suggested inadequate due diligence.

Altman's decision to engage directly with questions on X opened a public debate about what limits AI companies should set for themselves when working with intelligence and military clients. His repeated deferral to democratic accountability — "I very deeply believe in the democratic process, and that our elected leaders have the power" — satisfied some observers but frustrated those who argued that AI capabilities were advancing faster than the legal frameworks and oversight structures governing their use.

A Policy Gap With No Clear Owner

The OpenAI-Pentagon episode exposed a structural problem that neither the technology industry nor the U.S. government has yet resolved. AI capabilities, particularly in surveillance, data analysis and automated decision support, are now sufficiently powerful to change the nature of military operations and domestic law enforcement. The laws and oversight structures that govern intelligence collection, data use and automated decision-making have not kept pace.

Congressional oversight committees issued formal requests Tuesday for both OpenAI and the DoD to provide documentation of what safeguards were in place before the contract was signed. Several senators cited the episode as evidence that AI procurement by the U.S. government required mandatory congressional review and minimum transparency standards equivalent to those applied to conventional weapons systems.

The timing of the controversy added intensity to the debate: it coincided with the U.S.-Israel war against Iran, which is generating its own questions about AI's role in target identification and strike planning. Multiple defence analysts noted that the DoD had not publicly answered whether AI systems were involved in targeting decisions in Iran.

Anthropic's Refusal Reshapes Its Market Position

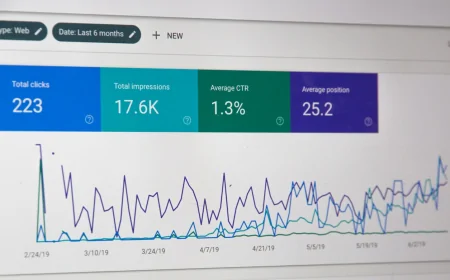

Anthropic's decision to walk away from the Pentagon deal, while immediately costly in revenue terms, appears to have generated unexpected commercial upside. Data tracked by multiple analytics firms showed downloads of Anthropic's Claude growing in the days following the controversy, while some ChatGPT users publicly announced they were switching platforms over the DoD deal. The pattern suggested that at least a portion of the AI user market was making purchasing decisions based on perceived ethical positioning, a dynamic that would have been considered marginal even 18 months ago.

According to Russell Brandom, technology policy editor at TechCrunch, "As OpenAI transitions from a wildly successful consumer startup into a piece of national security infrastructure, the company seems unequipped to manage its new responsibilities — and the Pentagon contract episode made that visible to everyone."

Whether OpenAI's revised contract language is sufficient to address the substantive concerns raised, or whether it represents a public relations adjustment without meaningful operational change, is a question the congressional oversight process will now attempt to answer.