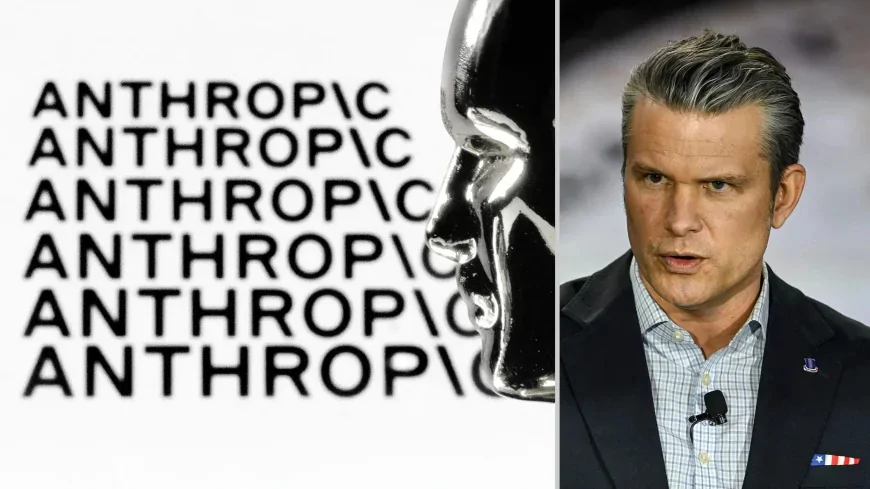

Anthropic Drops Core Safety Pledge Amid Pentagon Pressure Over Claude

Anthropic has scrapped its binding commitment to halt AI development if safety cannot be guaranteed, the same week the Pentagon threatened to pull its $200 million contract over Claude's use restrictions.

Anthropic Rewrites Its Safety Rules as Pentagon Ultimatum Looms

Anthropic built its reputation on a promise no other AI company made. In 2023, the company declared it would not train or deploy AI models capable of causing catastrophic harm unless safety measures could be guaranteed in advance. That pledge — the central pillar of its Responsible Scaling Policy — was presented as evidence that Anthropic was different from its rivals.

On Tuesday, the company rewrote the policy. The binding commitment to halt AI development if safety measures cannot keep pace with model capabilities has been removed. In its place sits a "Frontier Safety Roadmap" — described as a set of public goals that Anthropic will openly grade its own progress toward.

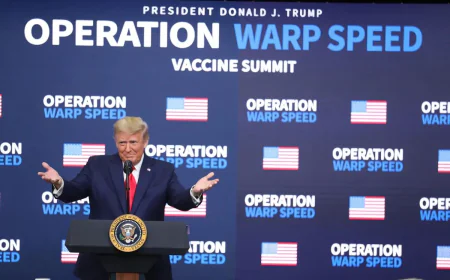

The change arrived the same week Defense Secretary Pete Hegseth delivered a direct ultimatum to Anthropic CEO Dario Amodei: roll back Claude's safety restrictions for military use by Friday, or face the cancellation of a $200 million Pentagon contract and designation as a supply chain risk.

What Changed — and Why Anthropic Says It Had to

Anthropic's chief science officer Jared Kaplan told TIME magazine that the company no longer believes unilateral pauses would benefit anyone. "We didn't really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments," Kaplan said.

The company argued that if a responsible developer stops training while competitors with weaker safeguards continue, the result is a less safe world — not a safer one. Rivals never adopted similar restrictions. The Trump administration has endorsed a largely hands-off approach to AI regulation. No federal AI law is on the horizon.

In place of hard tripwires, Anthropic will now publish detailed risk reports at regular intervals and commit to publicly stated but nonbinding safety goals. The company called the revised policy "the strongest to date on the level of public accountability and transparency."

The Pentagon Conflict: Two Red Lines Still Standing

The RSP change is officially separate from the Pentagon standoff, according to a source familiar with the matter. But the timing has drawn intense scrutiny.

Claude became the first AI model cleared to operate on US military classified networks after a Pentagon contract awarded in July last year. The acceptable use policy written into that contract contained two explicit restrictions: Claude could not be used for mass surveillance of American citizens, and it could not be used in fully autonomous weapons systems that make lethal decisions without human involvement.

Hegseth's ultimatum demanded those restrictions be removed. Anthropic rejected revised contract language sent by the Pentagon on Wednesday. "New language framed as compromise was paired with legalese that would allow those safeguards to be disregarded at will," the company said in a statement.

Industry Reaction and the Bigger Picture

The reaction from the AI safety community was swift and largely critical. Chris Painter, director of METR, a nonprofit focused on AI risk evaluation, said the changes were understandable given competitive pressures but potentially an ill omen for the field.

According to Painter, "I like the emphasis on transparent risk reporting and publicly verifiable safety roadmaps — but removing the hard stop commitment changes the fundamental calculus of what responsible scaling means."

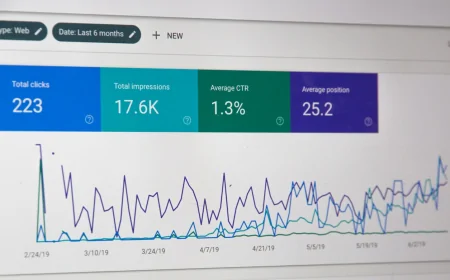

Anthropic's valuation reached $380 billion after a $30 billion funding round in February. Its annualized revenue is growing tenfold each year. Claude Code, its software-writing tool, has attracted a devoted user base among enterprise customers. The company is no longer a scrappy safety-first startup; it is one of the most valuable private companies in the United States.

Whether the loosening of Anthropic's safety framework represents a pragmatic adaptation to a changed regulatory landscape — or the beginning of an erosion that safety advocates have long feared — may depend on choices the company makes in the months ahead, as both the Pentagon standoff and the race to the AI frontier continue to intensify.